Build on the Databricks Data Intelligence Platform

faster, safer, smarter

We design, build, and operate lakehouse-native apps, agents, and analytics using Mosaic AI, Unity Catalog, Delta Sharing, and SQL Warehouses.

The Databricks AI Challenge

Enterprises are eager to scale AI—but face silos, governance bottlenecks, and fragmented tools. Traditional data architectures slow innovation, inflate costs, and block cross-team collaboration.

Closed Architectures & Data Silos

Legacy warehouses and rigid SaaS platforms lock data in proprietary formats, making AI integration complex and expensive. Teams often rebuild pipelines or duplicate data just to experiment with ML.

Governance Complexity & Shadow AI

Without unified governance across data, models, and dashboards, many enterprises face compliance risks, audit gaps, and untracked usage. AI initiatives sprawl in silos—hard to monitor, harder to trust.

Driving Success Across Industries

Empowering Business Transformation Through Databricks Solutions

The Databricks Data Intelligence Platform: Unified Analytics and AI

Unify all your data, analytics, and AI workloads on a single, open lakehouse platform to accelerate innovation and collaboration.

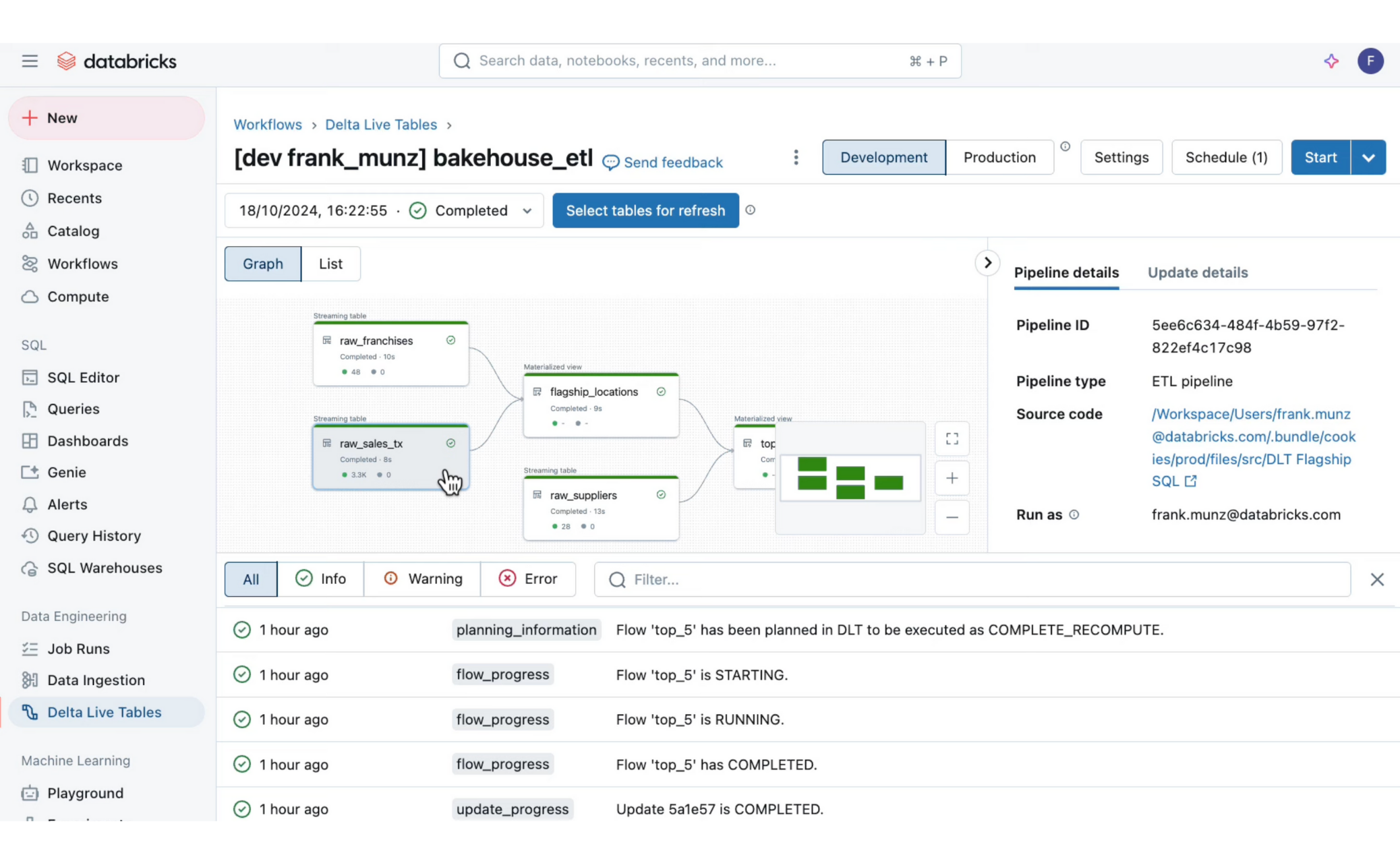

Build Reliable, Scalable, and Automated Data Pipelines

Databricks provides an end-to-end data engineering solution that empowers engineers, analysts, and developers to build and orchestrate high-quality data pipelines for analytics and AI.

Efficient Data Ingestion with Auto Loader

Incrementally and idempotently ingest new data as it arrives in cloud storage, with automatic schema detection to simplify the process.
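As a rough illustration of the ingestion pattern described above, the following PySpark sketch reads newly arrived JSON files with Auto Loader and appends them to a table. The function name, the paths, and the table name are placeholders invented for this example, and `spark` is assumed to be an active session in a Databricks workspace. Treat it as a starting point rather than a reference implementation.

```python
def build_autoloader_stream(spark, source_path, schema_path, checkpoint_path, target_table):
    """Incrementally ingest JSON files from cloud storage into a table.

    All arguments are hypothetical placeholders supplied by the caller;
    `spark` is assumed to be a SparkSession running on Databricks.
    """
    raw = (
        spark.readStream.format("cloudFiles")              # Auto Loader source
        .option("cloudFiles.format", "json")               # format of the incoming files
        .option("cloudFiles.schemaLocation", schema_path)  # where the inferred schema is tracked
        .load(source_path)
    )
    return (
        raw.writeStream
        .option("checkpointLocation", checkpoint_path)     # records which files were already ingested
        .trigger(availableNow=True)                        # process the current backlog, then stop
        .toTable(target_table)
    )
```

The checkpoint location is what lets a rerun skip files that were already processed, and the schema location is where the inferred schema is kept between runs.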

Unified Batch and Streaming Processing

Use a single set of APIs and a unified storage layer for both batch and real-time data, eliminating the need for separate infrastructures.
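One way to picture the "single set of APIs" point is a transformation written once and reused for both a batch read and a streaming read of the same table. The sketch below assumes a `spark` session on Databricks; the column names (`order_id`, `amount`, `order_ts`) and the function names are invented for illustration.

```python
def clean_orders(df):
    """Shared business logic: works on batch and streaming DataFrames alike."""
    return (
        df.filter("amount > 0")  # drop refunds and zero-value rows
        .selectExpr("order_id", "amount", "CAST(order_ts AS DATE) AS order_date")
    )


def run_batch(spark, source_table, target_table):
    """One-off batch run over the full source table."""
    cleaned = clean_orders(spark.read.table(source_table))
    cleaned.write.mode("append").saveAsTable(target_table)


def run_streaming(spark, source_table, target_table, checkpoint_path):
    """Continuous run over the same source, reusing clean_orders unchanged."""
    cleaned = clean_orders(spark.readStream.table(source_table))
    return (
        cleaned.writeStream
        .option("checkpointLocation", checkpoint_path)  # tracks streaming progress
        .toTable(target_table)
    )
```

Only the read and write calls differ between the two entry points; the transformation itself is shared.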

Leveraging the Databricks Data Intelligence Platform

Unify data, analytics, and AI on a single open lakehouse to accelerate innovation, cut costs, and break silos.

Break Down Silos

Eliminate data fragmentation by consolidating all sources on an open lakehouse, making AI and analytics seamless and scalable.

Govern with Confidence

Apply unified governance for data, models, and dashboards with Unity Catalog—ensuring compliance, security, and trust.

Accelerate AI & Analytics

Streamline pipelines with Delta Lake, Auto Loader, and real-time processing to power faster insights and ML adoption.

Enable Open Collaboration

Share securely across teams and partners with Delta Sharing and Clean Rooms—no duplication, no lock-in.

Why Choose Saasinator?

We combine deep Databricks expertise with industry-specific knowledge to deliver scalable, AI-ready data solutions.

Deep Databricks Ecosystem Mastery

Our teams thoroughly understand the Databricks Lakehouse, Delta Lake, and MLflow, ensuring seamless unification of data, analytics, and AI.

Dedicated Data & AI Expert Crew

We bring experienced consultants who know how to architect pipelines, build advanced ML models, and operationalize AI within your enterprise.

Custom-Built Lakehouse Solutions

Unlike off-the-shelf platforms, we design Databricks solutions tailored to your unique data strategy, workflows, and business outcomes.

Databricks Modules Enhanced with AI

Explore the Databricks modules and where AI copilots, machine learning, and automation create measurable impact.

Saasinator — Unified Databricks Capability Model

A three-circle Venn diagram: the graphic carries minimal labels, and the details are listed below. The highlighted center represents Saasinator.

- Data Engineering Excellence: Reliable pipelines, real-time ingestion, and scalable processing with Delta Lake and Auto Loader.

- AI & Machine Learning Innovation: Unified model training, ML lifecycle management, and accelerated experimentation.

- Governance & Collaboration: End-to-end governance with Unity Catalog, plus secure data sharing and clean room collaboration.

- Intersection (highlighted), Saasinator: A unified lakehouse platform that blends engineering, AI, and governance to break silos, cut costs, and accelerate business transformation.

Our AI Solutions for Databricks

Comprehensive services to transform your data, analytics, and AI with Databricks.

Databricks AI Discovery Workshop

A 1–2 week engagement that identifies high-impact AI and data opportunities in your Databricks Lakehouse environment, with clear ROI projections.

Databricks AI Enablement Consulting

Strategic advisory to align AI and ML adoption with business goals, while building internal data science and engineering capabilities.

Data & AI Portfolio Rationalization

Comprehensive audit of your data and analytics stack to identify redundant tools and highlight high-ROI Databricks replacement opportunities.

Custom AI & ML App Design

Full lifecycle development of bespoke AI/ML models and applications that leverage Databricks’ Lakehouse, Delta Lake, and MLflow.

Managed Databricks AI Services

Ongoing support, optimization, and strategic iteration for deployed Databricks AI solutions, ensuring continuous value delivery.

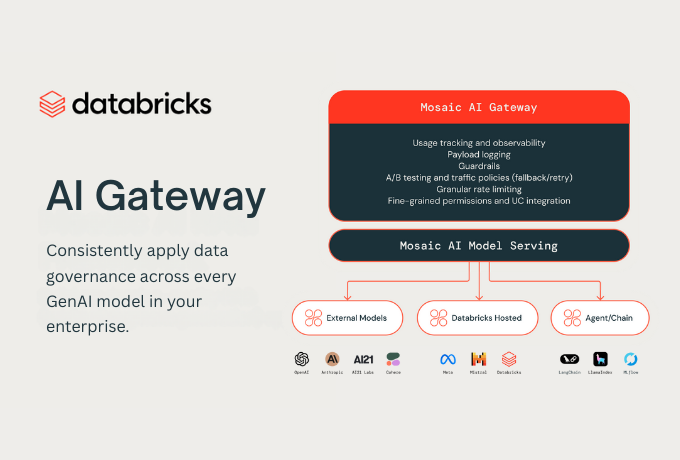

Mosaic AI — Agents, Evals & Models

Design agentic systems with tool use, memory, and guardrails; evaluate with AI judges; ship to production with Databricks serverless endpoints and gateways. Use DBRX or bring your own models.

Agent Stack

Multi-tool agents, retrieval, and function calling with built-in evaluation and observability for quality and safety.

Evals & Gateway

Automate offline and live evals, route through AI Gateway, and enforce policies — all governed by Unity Catalog.

Models incl. DBRX

Adopt open, efficient MoE models like DBRX for state-of-the-art performance, or integrate 3rd-party / proprietary LLMs.

Common Agent Use Cases

Customer support copilots and auto-resolution

Financial planning & forecasting assistants

Document Q&A with retrieval on Unity-governed assets

SQL generation and analytics copilots

Delivery Accelerators

Eval harness

AI judges, prompts, and test sets

Serving

Serverless endpoints, caching

Safety

Prompt/PII checks + approvals

Observability

Cost, latency, success rates

Unity Catalog — Intelligent Governance

A single pane to discover, govern, and monitor data, models, metrics, dashboards, and agents. Enforce fine-grained access, track lineage, and surface quality signals.

Catalog all assets

Tables, files, ML models, prompts, dashboards, and metrics in one governed catalog.

Lineage & quality

End-to-end lineage and intelligent quality signals to assess trust and usage.

Fine-grained controls

Row/column filters, tokenization, attribute-based policies, and approval workflows.
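To make the row and column controls more concrete, here is a small sketch that builds the Databricks SQL statements for a row filter and a column mask. The table, function, column, and group names are hypothetical, and the helper functions are not part of any Databricks library; on a workspace, each statement would be run with something like `spark.sql(stmt)`. The inputs are assumed to be trusted identifiers, since they are interpolated directly into the SQL text.

```python
def row_filter_sql(table, filter_fn, column, allowed_value, admin_group):
    """Return SQL that limits non-admin users to rows where column = allowed_value."""
    create_fn = (
        f"CREATE OR REPLACE FUNCTION {filter_fn}({column} STRING) "
        f"RETURN is_account_group_member('{admin_group}') OR {column} = '{allowed_value}'"
    )
    apply_fn = f"ALTER TABLE {table} SET ROW FILTER {filter_fn} ON ({column})"
    return [create_fn, apply_fn]


def column_mask_sql(table, mask_fn, column, admin_group):
    """Return SQL that hides a column's value from users outside admin_group."""
    create_fn = (
        f"CREATE OR REPLACE FUNCTION {mask_fn}({column} STRING) "
        f"RETURN CASE WHEN is_account_group_member('{admin_group}') "
        f"THEN {column} ELSE '***' END"
    )
    apply_fn = f"ALTER TABLE {table} ALTER COLUMN {column} SET MASK {mask_fn}"
    return [create_fn, apply_fn]


# Hypothetical names, used only to show the generated statements.
statements = (
    row_filter_sql("main.sales.orders", "main.sales.region_filter", "region", "US", "admins")
    + column_mask_sql("main.sales.orders", "main.sales.email_mask", "email", "admins")
)
for stmt in statements:
    print(stmt)
```

Keeping the policy logic in SQL functions means the same filter or mask can be attached to several tables and reviewed in one place.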

Our AI Delivery Squad

A cross-functional team of experts working together to design, build, and optimize Databricks solutions that unlock the power of data, analytics, and AI for your business.

Lakehouse Architects

Specialists in the Databricks Lakehouse who know how to unify data, analytics, and AI on a single platform.

Data Engineers

Builders who design pipelines, manage ETL/ELT processes, and ensure smooth data integration across structured and unstructured sources.

Business Domain Developers

Technologists who understand both code and your industry’s unique requirements to customize Databricks solutions.

Data Scientists & ML Engineers

Experts who apply advanced analytics, machine learning, and AI models to transform data into predictive insights and innovation.

Domain SMEs

Subject matter experts with deep process and industry knowledge to guide Databricks implementation and ensure relevance.

UX & Human-Centric Designers

Designers ensuring Databricks solutions, dashboards, and applications are intuitive, accessible, and user-friendly.

Subscribe to Databricks Insights & Updates

Actionable insights on data + AI, success stories, and platform innovations — straight to your inbox.

From Discovery to Production

A pragmatic, outcome-oriented program that de-risks your first workloads.

Weeks 1–2: Discovery

Inventory data sources, map use cases, quantify value; define success metrics and guardrails.

Weeks 3–4: Design

Architect lakehouse zones, catalog strategy, eval plans; choose serving patterns and SLAs.

Weeks 5–8: Build

Implement pipelines, models/agents, governance; set up Workflows & DAB CI/CD; run evals.

Weeks 9–10: Deploy

Progressive rollout, dashboards, training, and production SLOs with observability.

Ongoing: Optimize

Tune cost and performance; expand catalog coverage and sharing; iterate agents with eval feedback.

Our Commitment to ROI

Our Databricks solutions are designed to maximize measurable ROI and process efficiency. We help clients:

Rebuild Only What Matters

Focus on high-impact data and analytics areas where the Databricks Lakehouse delivers the greatest value, avoiding unnecessary complexity.

Cut Data Waste

Eliminate redundant data silos and inefficient pipelines to reduce total cost of ownership and unlock unified insights.

Boost Automation

Deploy intelligent workflows, from automated ETL to ML model management, freeing teams for higher-value innovation.

Demonstrate Clear Value

Track transparent KPIs that prove tangible business benefits from Databricks investments—making impact visible to every stakeholder.

Insights

Insights on AI-native apps, SaaS rationalization, and the future of enterprise software.

AI Pilots that Prove ROI in 2 Weeks: The Saasinator Approach

Breaking Digital Debt: Rebuilding Enterprise Workflows with AI

Why Join?

Real Databricks + AI Playbooks

Get hands-on guides pulled from real lakehouse builds — not theory.

Fast to Impact

Launch pilots in weeks, not months — with Databricks-native tools.

Privacy-First, No Noise

No spam. No list selling. GDPR-safe and signal-only content.

Tailored to Your Role

Focused insights for Data, AI, IT, and Analytics leaders — zero fluff.

Get your Databricks AI roadmap

We’ll map 3–5 high-value AI use cases for your Databricks stack.